4 Type-Safety Patterns for Production AI — Lessons from Toucan's Backend


David Nowinsky

Published on 05.03.26

Updated on 19.03.26

5 min


LLM integrations don’t have to be piles of JSON strings and “we’ll see what comes back”. In our backend at Toucan, we treat LLMs as typed, observable services—not magic. Here’s what that looks like in practice, and what we’d do again if we were starting from scratch.

Why type safety matters more with LLMs than with REST

With a traditional API, both sides usually agree on a contract. With LLMs, that contract is… “whatever the model feels like returning today”.

If you don’t put a structure around that, you get:

- Stringly‑typed everything – Payloads, tools, errors and states are all any.

- Silent failures – A field gets renamed in the prompt, the model still answers, and you only notice in production.

- Glue code you can’t refactor – One change to a tool or schema ripples through the whole codebase.

At Toucan, our AI assistant runs as a multi‑agent system behind the scenes. The orchestrator coordinates sub‑agents and model calls, but almost everything it touches is strongly typed. The LLM is the only “fuzzy” piece—everything around it is explicit.

A mental model: the LLM as a typed boundary

We think of the LLM as a boundary between two strongly‑typed worlds:

- Inside the backend: we use strict types and schemas for context, state, tool inputs/outputs, and events.

- At the boundary: prompts and responses are wrapped in validation and translation layers.

- Outside: the model can be upgraded, swapped, or tuned without rewriting business logic.

The goal isn’t to “fully control” the model; it’s to make the rest of the system boring, predictable, and refactorable.

In an AI embedded analytics context, this matters especially because the AI operates across hundreds of tenants simultaneously — a type error that leaks the wrong tenant's data is a security issue, not just a bug.

Typed context and state around the agent

Before we even call an LLM, we standardize and type who is calling and what they’re asking for:

- Context: organization, authenticated user, topic, request metadata.

- State: where we are in the conversation and plan (current step, previous tools, intermediate results).

This gives us:

- Compile‑time guarantees that every model call has the required fields.

- Safer evolution: adding a new dimension (e.g. a new topic field) touches a small set of types instead of dozens of ad‑hoc objects.

- Clearer observability: we can log and trace context/state in a consistent way.
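As a minimal sketch of what typed context and state could look like: the names below (AgentContext, AgentState, ModelCall, buildModelCall) and the branded ID types are assumptions made for illustration, not Toucan's actual types. The branded IDs show one way to make mixing up a user and an organization a compile error.

```typescript
// Hypothetical names throughout: this illustrates the idea, not Toucan's actual types.

// Branded string types: a UserId cannot be passed where an OrganizationId is expected.
type OrganizationId = string & { readonly __brand: "OrganizationId" };
type UserId = string & { readonly __brand: "UserId" };

// Who is calling and what they are asking about.
interface AgentContext {
  readonly organizationId: OrganizationId;
  readonly userId: UserId;
  readonly topic: string;
  readonly requestId: string;
}

// Where we are in the conversation and plan.
interface AgentState {
  readonly currentStep: number;
  readonly previousTools: readonly string[];
  readonly intermediateResults: readonly unknown[];
}

// Every model call must carry both; omitting a field is a compile error.
interface ModelCall {
  readonly context: AgentContext;
  readonly state: AgentState;
  readonly prompt: string;
}

function buildModelCall(context: AgentContext, state: AgentState, question: string): ModelCall {
  return { context, state, prompt: `[topic: ${context.topic}] ${question}` };
}

// The casts stand in for wherever IDs are validated on the way in (for example an auth layer).
const sampleContext: AgentContext = {
  organizationId: "org_42" as OrganizationId,
  userId: "user_7" as UserId,
  topic: "sales",
  requestId: "req_001",
};
const initialState: AgentState = { currentStep: 0, previousTools: [], intermediateResults: [] };
const sampleCall = buildModelCall(sampleContext, initialState, "What was revenue last month?");
```

Adding a new dimension, such as a second topic field, would then mean editing AgentContext and following the compiler errors, rather than hunting for ad‑hoc objects.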

From a CTO perspective, this is what makes the system “explainable” to your own team: you can see, in concrete types, what the assistant knows at any point in time.

We also cover this from an architectural angle in our article on scaling from a monolithic LLM to a multi-agent system.

Typed tools, not ad‑hoc functions

Tools are where the real work happens: querying data, exploring schemas, planning, rendering charts, delegating to sub‑agents. In our backend, a tool is never “just a function the model can call”.

Instead, each tool has:

- A typed input shape (what the LLM must provide).

- A typed output shape (what the rest of the system can rely on).

- A structured error shape (how failures are surfaced).

Conceptually:
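A minimal sketch of such a contract follows. Every name in it (Tool, ToolResult, ToolError, countRowsTool, invokeTool) and the in-memory data source are hypothetical; it illustrates the three shapes listed above rather than Toucan's actual code.

```typescript
// Hypothetical shapes: every name here is illustrative, not Toucan's actual API.

// Failures are data with a discriminating `kind`, not thrown exceptions.
type ToolError =
  | { kind: "invalid_input"; diagnostics: string[] }
  | { kind: "execution_failed"; diagnostics: string[] };

// A tool either succeeds with typed output or fails with a structured error.
type ToolResult<Output> =
  | { status: "success"; output: Output }
  | { status: "failure"; error: ToolError };

// The contract: a name, a parser for untrusted LLM arguments, and a typed run function.
interface Tool<Input, Output> {
  readonly toolName: string;
  parseInput(raw: unknown): Input | null;
  run(input: Input): ToolResult<Output>;
}

interface CountRowsInput { table: string }
interface CountRowsOutput { rowCount: number }

// Stand-in data source so the example runs without a database.
const fakeTables: Record<string, number | undefined> = { orders: 1200, customers: 87 };

const countRowsTool: Tool<CountRowsInput, CountRowsOutput> = {
  toolName: "count_rows",
  parseInput(raw) {
    if (typeof raw !== "object" || raw === null) return null;
    const table = (raw as Record<string, unknown>).table;
    return typeof table === "string" ? { table } : null;
  },
  run(input) {
    const rowCount = fakeTables[input.table];
    if (rowCount === undefined) {
      return { status: "failure", error: { kind: "execution_failed", diagnostics: [`unknown table: ${input.table}`] } };
    }
    return { status: "success", output: { rowCount } };
  },
};

// One generic entry point: validate the model-provided arguments, then run.
function invokeTool<I, O>(tool: Tool<I, O>, rawArgs: unknown): ToolResult<O> {
  const input = tool.parseInput(rawArgs);
  if (input === null) {
    return { status: "failure", error: { kind: "invalid_input", diagnostics: [`${tool.toolName}: arguments did not match the input shape`] } };
  }
  return tool.run(input);
}

const okResult = invokeTool(countRowsTool, { table: "orders" });
const badResult = invokeTool(countRowsTool, { tabel: "orders" }); // misspelled field name
```

Here okResult is a success carrying a typed rowCount, while the misspelled field in badResult surfaces as an invalid_input failure at the tool boundary instead of reaching the data source.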

This buys us a few things immediately:

- Safer prompts – We can describe exactly what’s expected (fields, enums, constraints) instead of “give me some JSON”.

- Runtime validation – If the model returns something that doesn’t match the schema, we catch it at the tool boundary and treat it as a first‑class error.

- Refactorable tools – Changing a tool signature is a normal code change, not a risky global search/replace.

The LLM doesn’t need to know our internal types. But our code does—and that’s what counts.

Pattern: “Parse, Don’t Guess” for model outputs

A small but important practice: we never assume the model’s response is correct just because it “looks” reasonable.

Instead, we:

- Ask for structured outputs (usually JSON‑like) aligned with our schemas.

- Parse and validate against those schemas at the boundary.

- Convert validation failures into typed errors that the orchestrator can handle.

This has two side effects CTOs usually like:

- Graceful degradation – A bad model output becomes an explicit “model_output_invalid” error, not a random crash in a downstream tool.

- Better model iteration – When you tune prompts or switch providers, you immediately see where outputs deviate from expectations.

You still need good prompts, but you are no longer fully dependent on them for correctness.
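The parse-and-validate step can be sketched as follows. The ChartRequest schema, the ParseResult type and the function names are assumptions made for the example, and the validator is hand-written only to keep the sketch free of dependencies; a schema library could play the same role.

```typescript
// Hypothetical schema and names; a hand-written validator keeps the sketch dependency-free.
interface ChartRequest {
  chartType: "bar" | "line" | "pie";
  metric: string;
}

type ParseResult<T> =
  | { ok: true; value: T }
  | { ok: false; error: { kind: "model_output_invalid"; diagnostics: string[] } };

function invalid(diagnostics: string[]): ParseResult<never> {
  return { ok: false, error: { kind: "model_output_invalid", diagnostics } };
}

// Turns raw model text into either a trusted ChartRequest or a typed error.
function parseChartRequest(rawText: string): ParseResult<ChartRequest> {
  let parsed: unknown;
  try {
    parsed = JSON.parse(rawText);
  } catch {
    return invalid(["response is not valid JSON"]);
  }
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    return invalid(["response is not a JSON object"]);
  }
  const fields = parsed as Record<string, unknown>;
  const chartType = fields.chartType;
  const metric = fields.metric;

  // Narrow each field; null marks a field that failed validation.
  const validChartType =
    chartType === "bar" || chartType === "line" || chartType === "pie" ? chartType : null;
  const validMetric = typeof metric === "string" && metric.length > 0 ? metric : null;

  // Collect every problem so the orchestrator sees the full picture at once.
  const diagnostics: string[] = [];
  if (validChartType === null) diagnostics.push("chartType must be one of: bar, line, pie");
  if (validMetric === null) diagnostics.push("metric must be a non-empty string");
  if (validChartType === null || validMetric === null) return invalid(diagnostics);

  return { ok: true, value: { chartType: validChartType, metric: validMetric } };
}

const goodOutput = parseChartRequest('{"chartType":"bar","metric":"revenue"}');
const renamedField = parseChartRequest('{"type":"bar","metric":"revenue"}');
const notJson = parseChartRequest("Sure! Here is your chart:");
```

The renamedField case is the silent failure described earlier: the model still answers, but the missing chartType becomes an explicit model_output_invalid error with one diagnostic.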

Typed events and observability

Type safety isn't just for inputs and tools; we also apply it to observability:

- Each request gets a trace identifier, propagated through the orchestrator and every sub-agent call.

- Each tool invocation and sub-agent delegation emits a structured event with typed metadata: which agent ran, what the outcome was, and the error kind if it failed.

- Planning steps carry explicit status transitions (pending → in_progress → completed | skipped) with ISO 8601 timestamps, so you always know where execution stopped.
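The status transitions in the last point can be sketched as a small state machine. The status names come from the text; the PlanStep shape, the transition function, and the reading that only an in-progress step can be completed or skipped are assumptions.

```typescript
// Status names come from the article; everything else here is hypothetical.
type StepStatus = "pending" | "in_progress" | "completed" | "skipped";

interface PlanStep {
  readonly stepId: string;
  readonly currentStatus: StepStatus;
  readonly updatedAt: string; // ISO 8601 timestamp
}

// Allowed moves: pending → in_progress → completed | skipped. Terminal states allow none.
const allowedTransitions: Record<StepStatus, readonly StepStatus[]> = {
  pending: ["in_progress"],
  in_progress: ["completed", "skipped"],
  completed: [],
  skipped: [],
};

// Returns a new step with the next status, or throws on an illegal move.
function transition(step: PlanStep, next: StepStatus, now: Date): PlanStep {
  if (allowedTransitions[step.currentStatus].indexOf(next) === -1) {
    throw new Error(`illegal transition: ${step.currentStatus} -> ${next}`);
  }
  return { ...step, currentStatus: next, updatedAt: now.toISOString() };
}

const created: PlanStep = {
  stepId: "step-1",
  currentStatus: "pending",
  updatedAt: "2026-03-05T09:00:00.000Z",
};
const started = transition(created, "in_progress", new Date("2026-03-05T09:00:01.000Z"));
const finished = transition(started, "completed", new Date("2026-03-05T09:00:04.000Z"));
```

Because each step records its last status and timestamp, a trace that ends with a step still in_progress shows where execution stopped.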

The events themselves follow the same rule:

- Each phase (classification, planning, execution, response) emits typed events.

- Tool calls and sub‑agent invocations carry structured metadata (duration, outcome, error kind, context).

This means:

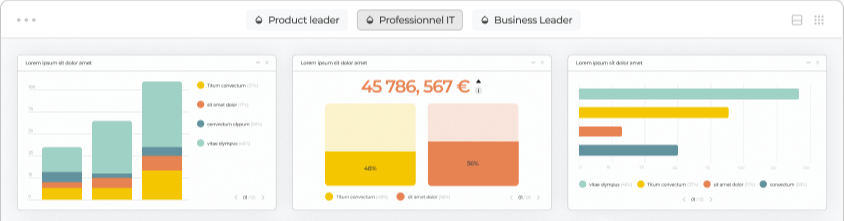

- Dashboards and alerts can be built on top of stable, typed fields, not free‑text logs.

- It’s easier to answer questions like “how often does the query builder fail vs the data explorer?” or “did the last change increase validation errors?”

- You can replay real traces in non‑production safely, because you know exactly what shapes to expect.

In practice, this is where type‑safe LLM integrations stop being a “nice TypeScript bonus” and start looking like real infrastructure.

We route these events through PostHog and Langfuse, which means:

- You can ask "how often does the query builder return a validation failure vs the data explorer?" and get a real answer, because failures are always { status: "failure", diagnostics: string[] }, not a caught exception swallowed in a log.

- When you change a prompt or swap a model, you immediately see where outputs start deviating from expected schemas.

This isn't a full typed event bus; it's simpler than that. The payoff is that your observability data has the same shape discipline as your code.
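To illustrate why a fixed failure shape pays off, here is a minimal sketch that computes failure rates per agent from typed events. The ToolEvent shape and the failureRateByAgent function are hypothetical, and the sketch deliberately leaves out how events reach PostHog or Langfuse.

```typescript
// Hypothetical event shape; the failure payload mirrors
// { status: "failure", diagnostics: string[] } from the text.
type ToolOutcome =
  | { status: "success" }
  | { status: "failure"; diagnostics: string[] };

interface ToolEvent {
  readonly traceId: string;
  readonly agent: string;
  readonly durationMs: number;
  readonly outcome: ToolOutcome;
}

// Failure rate per agent, computed from typed fields rather than by searching log lines.
function failureRateByAgent(toolEvents: readonly ToolEvent[]): Record<string, number> {
  const totals: Record<string, { runs: number; failures: number } | undefined> = {};
  for (const toolEvent of toolEvents) {
    const entry = totals[toolEvent.agent] ?? { runs: 0, failures: 0 };
    entry.runs += 1;
    if (toolEvent.outcome.status === "failure") entry.failures += 1;
    totals[toolEvent.agent] = entry;
  }
  const rates: Record<string, number> = {};
  for (const agent of Object.keys(totals)) {
    const entry = totals[agent];
    if (entry !== undefined) rates[agent] = entry.failures / entry.runs;
  }
  return rates;
}

const sampleEvents: ToolEvent[] = [
  { traceId: "t1", agent: "query_builder", durationMs: 120, outcome: { status: "success" } },
  { traceId: "t2", agent: "query_builder", durationMs: 95, outcome: { status: "failure", diagnostics: ["unknown column"] } },
  { traceId: "t2", agent: "data_explorer", durationMs: 40, outcome: { status: "success" } },
];
const sampleRates = failureRateByAgent(sampleEvents);
```

With the sample events above, the query builder fails in one of two runs and the data explorer in none, which is exactly the kind of comparison the text describes.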

Practical lessons we’d reuse

If we were starting again tomorrow, we’d keep these principles:

- Treat the LLM as a typed boundary. Everything around it (context, state, tools, events) should be boring and well‑typed.

- Give tools real contracts. Inputs, outputs, and errors should be explicit types, not loosely defined blobs.

- Validate aggressively at the edges. Parse and check model outputs before they hit the rest of your system.

- Instrument with typed events. Observability data should be as structured as your APIs.

Type‑safe LLM integrations won’t make your models perfect. But they will make your systems more predictable, debuggable, and evolvable—which is ultimately what matters for a production AI backend.

How these patterns connect to broader error handling strategy is covered in our article on 5 lessons from running a multi-agent system in production.

If you’re starting now

If we were advising a team building their first LLM backend today, we’d suggest:

- Bake in types from day one. Define schemas for context, tools and events before you wire any prompts, instead of retrofitting types around “whatever the model returns”.

- Keep prompts thin, code thick. Push branching, validation and orchestration into typed code; use the model for judgment and language, not for control flow.

- Standardize tool contracts early. Reuse a small set of input/output patterns across tools so adding a new one doesn’t mean inventing a new shape every time.

- Treat observability as a feature. Add trace IDs, phase/tool metrics and checkpoints alongside your very first agent, not as a later “platform” project.
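The "prompts thin, code thick" suggestion can be sketched like this: the model only supplies a label, and typed code decides what happens next. The intent names, parseIntent and route are hypothetical; the agent names echo the query builder and data explorer mentioned earlier.

```typescript
// Hypothetical intents and routing targets, for illustration only.
type Intent = "ask_data" | "explore_schema" | "small_talk";

const knownIntents: readonly Intent[] = ["ask_data", "explore_schema", "small_talk"];

// The model's only job is to pick a label; anything unexpected is rejected.
function parseIntent(modelLabel: string): Intent | null {
  const normalized = modelLabel.trim().toLowerCase();
  for (const intent of knownIntents) {
    if (intent === normalized) return intent;
  }
  return null;
}

// Control flow lives in code. The `never` check turns a newly added Intent
// that is not handled here into a compile error.
function route(intent: Intent): string {
  switch (intent) {
    case "ask_data":
      return "query_builder";
    case "explore_schema":
      return "data_explorer";
    case "small_talk":
      return "direct_reply";
    default: {
      const unreachable: never = intent;
      throw new Error(`unhandled intent: ${String(unreachable)}`);
    }
  }
}

const parsedIntent = parseIntent("  Ask_Data ");
const unknownIntent = parseIntent("delete everything");
```

The prompt stays small because it only has to elicit one of three labels; branching, validation and the fallback for unknown labels are ordinary code that can be tested and refactored.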

About Toucan

Toucan AI is an AI-native embedded analytics chat. We help SaaS companies integrate analytics directly into their product. Thanks to a semantic layer and natural language question-to-chart capabilities, users can simply ask questions in plain language and get instant visual answers, without writing a single query.

For product teams, this means faster shipping, simpler integration, and an analytics experience that drives higher adoption, better retention, and new revenue opportunities.

For the product context: Toucan is an AI-powered embedded analytics platform, and these patterns are what make it reliable at scale.

You can request your access :)


David Nowinsky

David is the Chief Technology Officer (CTO) at Toucan, the embedded analytics platform built for SaaS companies that want to deliver seamless, impactful data experiences to their users. With deep expertise in software engineering, data architecture, and product innovation, David leads the technical vision behind Toucan’s mission to make analytics simple, accessible, and scalable. On Toucan’s blog, David shares insights on embedded analytics best practices, technical deep-dives, and the latest trends shaping the future of SaaS product development. His articles help technical teams build better products, faster—without compromising on user experience.
